A Roadmap to Cloud Native Service Mesh For the Enterprise

Cloud native is the future for many organizations, which makes adopting a cloud native service mesh a critical move for the enterprise. In this blog post, we break down how to transition to a cloud native enterprise service mesh.

- Why Cloud Native Service Mesh Is the Future

- How to Move to Cloud Native Service Mesh For the Enterprise

- What's the Hybrid Service Mesh Option For Enterprise?

- Next Steps For Your Enterprise Service Mesh

Why Cloud Native Service Mesh Is the Future

The monolithic three-tier web application architecture that enterprises have relied on so heavily over the past 20 years is giving way to cloud native architectures built around a service mesh.

Many of these monolithic applications will persist into the future, much like the legacy applications before them. But organizations that are not prepared for this transition will find themselves shut out of cloud native, and of the markets cloud native enables.

That's why it's time for the enterprise to consider a move to cloud native service mesh.

How to Move to Cloud Native Service Mesh For the Enterprise

Here are three essential steps for moving to cloud native service mesh for your enterprise.

1. Move to Serverless Architecture

The transition to lightweight serverless architectures is already well underway, and heavyweight JVMs with large heaps are being left behind.

This transition paves the way for cloud native service mesh.

The next crucial step is interoperable microservices that expose APIs. These services are registered in, and discovered through, service registries, and they run across public and private high-performance service meshes.
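To make the role of a service registry concrete, here is a minimal sketch of one: services register their endpoints under a name, and callers resolve a name to an endpoint instead of hard-coding addresses. This is an illustration only. The `ServiceRegistry` class, its method names, and the example addresses are all hypothetical, and a production mesh would rely on its own discovery mechanism rather than an in-memory table like this.

```python
from collections import defaultdict
from itertools import count


class ServiceRegistry:
    """Toy in-memory registry (hypothetical): service name -> known endpoints."""

    def __init__(self):
        self._endpoints = defaultdict(list)  # service name -> list of "host:port"
        self._counters = defaultdict(count)  # service name -> round-robin counter

    def register(self, service, endpoint):
        """Record that `service` is reachable at `endpoint` (idempotent)."""
        if endpoint not in self._endpoints[service]:
            self._endpoints[service].append(endpoint)

    def deregister(self, service, endpoint):
        """Remove an endpoint, for example when an instance shuts down."""
        if endpoint in self._endpoints[service]:
            self._endpoints[service].remove(endpoint)

    def resolve(self, service):
        """Return one endpoint for `service`, rotating across its instances."""
        endpoints = self._endpoints.get(service)
        if not endpoints:
            raise LookupError(f"no registered instances for {service!r}")
        return endpoints[next(self._counters[service]) % len(endpoints)]


if __name__ == "__main__":
    registry = ServiceRegistry()
    # Example addresses are made up for illustration.
    registry.register("orders", "10.0.0.5:8080")
    registry.register("orders", "10.0.0.6:8080")
    print(registry.resolve("orders"))
    print(registry.resolve("orders"))
```

Because callers only ever ask for a service by name, instances can be added, moved, or retired without changing the code that consumes the API.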

2. Move Your Monolith to Microservices

A monolith and a cloud native service mesh do not play well together. As more industry leaders move to service mesh infrastructure, your organization will need to make the same change.

The good news is, you can get there from here. With a little planning, you can keep supporting your legacy monolithic web applications while migrating to microservices and a cloud native service mesh.

3. Use a Staged Implementation and Hybridize

A staged implementation lets your organization choose when and how to migrate each service and application to the cloud native service mesh.

For example, you might start by moving from a monolithic environment to a monolith/mesh hybrid, and ultimately to a full enterprise service mesh.
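One way to picture a staged migration is as a routing table that sends already-migrated request paths to the mesh and everything else to the monolith. The sketch below assumes services are identified by URL path prefix. `StagedRouter`, its methods, and the example prefixes are hypothetical names chosen for illustration, not part of any particular mesh product.

```python
from dataclasses import dataclass, field

MONOLITH = "monolith"
MESH = "mesh"


@dataclass
class StagedRouter:
    """Hypothetical router: unmigrated path prefixes stay on the monolith."""

    migrated_prefixes: set = field(default_factory=set)

    def migrate(self, prefix):
        """Mark a path prefix as served by the mesh from now on."""
        self.migrated_prefixes.add(prefix)

    def rollback(self, prefix):
        """Send a prefix back to the monolith, e.g. after a failed cutover."""
        self.migrated_prefixes.discard(prefix)

    def backend_for(self, path):
        """Pick the backend for a request path, matching whole path segments."""
        for prefix in self.migrated_prefixes:
            if path == prefix or path.startswith(prefix.rstrip("/") + "/"):
                return MESH
        return MONOLITH

    def stage(self, all_prefixes):
        """Name the current stage: 'monolith', 'hybrid', or 'mesh'."""
        wanted = set(all_prefixes)
        migrated = wanted & self.migrated_prefixes
        if not migrated:
            return MONOLITH
        return MESH if migrated == wanted else "hybrid"


if __name__ == "__main__":
    router = StagedRouter()
    services = ["/orders", "/billing"]  # made-up service prefixes
    router.migrate("/orders")
    print(router.backend_for("/orders/42"), router.stage(services))
```

Keeping the monolith as the default backend means a service only moves when it is explicitly migrated, and a rollback is a single change to the table.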

Get Help Transitioning to a Cloud Native Service Mesh

OpenLogic experts can help you transition to cloud native service mesh. Talk to an expert today to learn how.

What's the Hybrid Service Mesh Option For Enterprise?

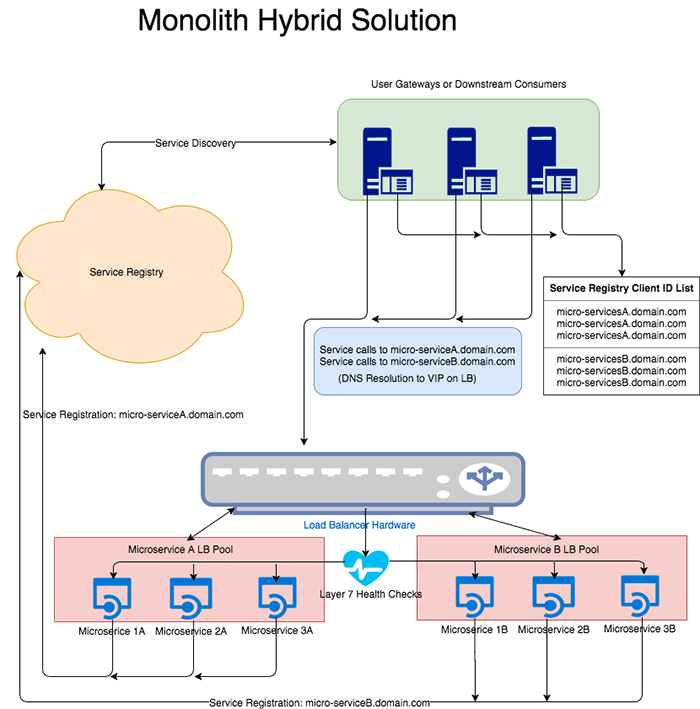

Here's what your hybrid enterprise service mesh option looks like.

In the hybrid stage, your organization's goal is to:

- Stand up the basic technologies that are required for an enterprise service mesh to operate.

- Integrate them into your monolithic architecture without affecting your existing applications.

This hybrid approach allows your organization to start porting services and applications from the monolith to the cloud native service mesh within your current development lifecycles, while maintaining current service levels.