Blog

December 20, 2018

It's difficult to practice DevOps with a monolithic application. Here, we break down how to move a monolithic application to Kubernetes with microservices.

Back to topWhy Move a Monolithic Application to Kubernetes?

I recently visited a customer in Europe for a three-day summit with their architecture team to tackle a common thread that we’ve been seeing across our OpenLogic team.

The customer had a monolithic application and the team determined that they couldn’t practice DevOps and use Kubernetes with a monolithic application. Practicing DevOps, however, was going to make everyone’s lives better.

Their executive team was investing in the development of their product, and there was a strong alignment on DevOps being the future. The architectural team had already made some excellent choices:

- Kubernetes for orchestration.

- Jenkins for CI/CD.

- An emphasis on Enterprise Integration Patterns.

This team is a prime example of the team that wants it all. They want the tracing, they want the dashboarding, they want the cool robot laser arm, and they’re lined up for the upgrade at the cyber clinic yesterday, credit chit in hand.

However, nobody’s ready for the uncomfortable questions about how the cool robot laser arm affects your love life, though. In other words, cultural and business buy-in are extremely important, especially on exactly how that monolith gets transformed into a microservice.

Back to topHow to Move a Monolithic Application to Kubernetes

Here's how to move a monolithic application to Kubernetes.

1. Know What Workloads to Move

Some workloads just aren’t ready to move to orchestrated containerization in Kubernetes.

Hard and fast: production RDBMS doesn’t belong in a container, and integration doesn’t mean ops, it means dev. Do not believe your 128GB, 64-thread Oracle workload is going to don its Thompson EyePhones and Johnny Mnemonic its way into production readiness. If your workload cannot handle disappearing without a trace, it is not a Kubernetes workload.

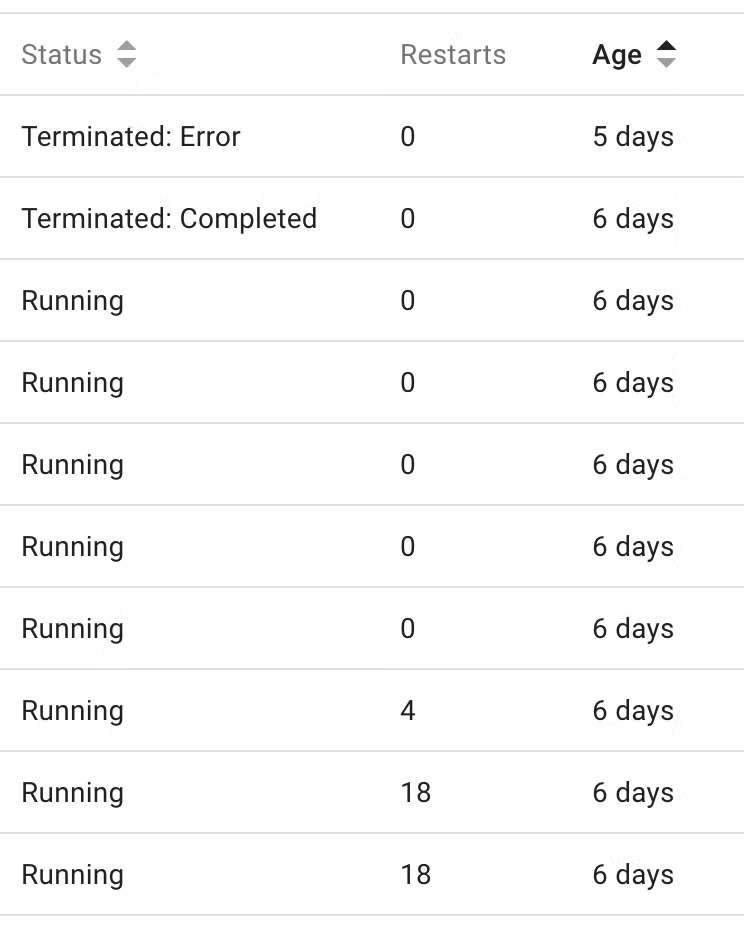

Let’s take a look at a screenshot of the Kubernetes dashboard, and look at a workload that’s been running for a while.

Figure 1: The Kubernetes Dashboard, highlighting status, uptime, and restarts metrics

Yes, “restarts” is a real metric. And yes, it will tick up. No, it’s not always graceful.

Ask your Oracle ops team the current uptime on your pet database. Observe the grand, venerable status of the months of uptime this represents. Ask them to pull the power out of the back to reboot it, just for kicks, not as a Disaster Recovery exercise. This might illustrate why that, just because you can, doesn’t mean you should.

2. Identify What to Put in a Container

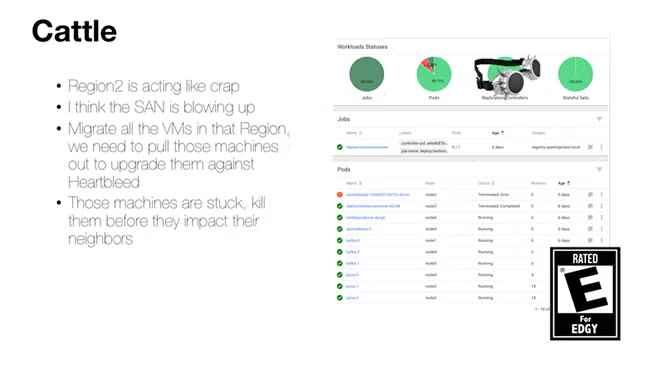

This is what’s known as the pets versus cattle problem in cloud computing. Let’s pull out some slides that I’ve specially annotated for this cyberpunk special edition. Let’s illustrate that just because you can, doesn’t mean you should.

The pets versus cattle problem is summed up as “only put survivable workloads in containers.”

While an engineer’s first thoughts might be that only “throwaway” workloads such as batch workers can make their way into Kubernetes. What I’m referring to here is multi-master, highly-available workloads that can negotiate the joining and leaving of a cluster.

Cassandra, ElasticSearch, and Kafka are examples of applications with these properties. They are all high performance, IO-intensive workloads that work great in production Kubernetes because they can survive reboots, and gracefully handle joining and rejoining. They understand concepts of idempotence and high availability. And they work with products like Zookeeper or their own cluster management to ensure that everything keeps moving along gracefully as nodes come and go.

This doesn’t mean your workload needs necessarily to be elastic, but it does need to be survivable, ephemeral, transaction-aware, idempotent, and most importantly, well understood on your team. This is why integration will not mean ops, it will mean re-architecting your entire suite of applications.

3. Identify What Not to Put in a Container

An example of an application that doesn’t belong in a container? Strangely, while there exists a Wildfly Docker image, the Java application your team has written for Wildfly may not be ready. If your team hasn’t done its homework on slimming down your image for speedy restarts, and really understands how things like session information is being replicated across your nodes, you might be able to use operations techniques like configuration to move your app into the Docker container.

However, if your application anything like the majority of the Wildfly applications we’ve supported in the past, it's very stateful, highly monolithic, and is more active/passive than ready to be load-balanced (active/active).

Back to topMove Your Monolithic Application to Kubernetes

Moving your monolithic application to Kubernetes can be tricky. Enlist the help of OpenLogic experts today. Our experts are well-versed in Kubernetes and microservices. They can help you do the migration — and provide support for your microservices journey.

Get in touch with our Kubernetes experts today to learn how we can help you migrate.