
July 28, 2022

Memory configuration for Apache Tomcat can be tricky. In this beginner's guide, we look at the causes behind common Tomcat memory issues, then discuss how to get started configuring Tomcat memory.

What Causes Tomcat Memory Problems?

There are several scenarios which can cause Tomcat memory issues, from simply not having enough heap size for your JVM, to more complicated issues like memory leaks that can exhaust the heap space and create OutOfMemoryErrors (OOME). While Tomcat 7 did introduce some out of memory protections for developers, it is still possible to run out of heap space in certain instances.

These instances can include running out of system resources, JVM misconfiguration, or poor coding practices. The key to troubleshooting an OOME is understanding why the condition was reached. When an OOME occurs, the JVM prints an error message to standard out or the log file detailing why it occurred. For instance:

Exception in thread "main" java.lang.OutOfMemoryError: Java heap space

at Heap.main(Heap.java:11)

The fix for a given OOME condition depends on why the heap ran out of space. There are several OOME conditions that may be reported here, which we will cover in a different post.
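
As a rough illustration of why the message text matters, the sketch below maps an OOME log line to the memory area it most likely points at. `OomeClassifier` and its category names are hypothetical, and the message fragments are the ones HotSpot-based JVMs commonly report; other JVMs may word them differently.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper (not part of Tomcat or the JDK): maps the detail message
// of an OutOfMemoryError to the memory area that most likely needs attention.
class OomeClassifier {

    // Message fragments commonly reported by HotSpot-based JVMs (assumption:
    // other JVMs may word these differently).
    private static final Map<String, String> AREAS = new LinkedHashMap<>();
    static {
        AREAS.put("Java heap space", "heap");
        AREAS.put("GC overhead limit exceeded", "heap");
        AREAS.put("Metaspace", "metaspace");
        AREAS.put("Compressed class space", "metaspace");
        AREAS.put("native thread", "native threads");
    }

    static String classify(String logLine) {
        if (logLine == null) {
            return "unknown";
        }
        for (Map.Entry<String, String> entry : AREAS.entrySet()) {
            if (logLine.contains(entry.getKey())) {
                return entry.getValue();
            }
        }
        return "unknown";
    }

    public static void main(String[] args) {
        String line = "Exception in thread \"main\" java.lang.OutOfMemoryError: Java heap space";
        System.out.println(classify(line)); // prints "heap"
    }
}
```

The category only tells us where to start looking; it does not say whether the fix is more memory or a code change.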

JVM Memory Pool

The JVM Memory Pool is the total memory utilized by Java for an application. This includes both Heap and Non-Heap memory. The amount of space needed for the memory pool will vary from application to application. Having a good application profile is key to determining what the appropriate sizing is.

Things to consider when profiling the app include how much data the app retains while running, how many simultaneous users the application will be supporting, and how the application performs under load. For example, is the JVM slow to start up? Is it performing a lot of garbage collection events?
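
One way to start building that profile is to ask the running JVM how much heap and non-heap memory it is using. The sketch below uses the standard `java.lang.management` API; the class name `MemoryPoolSnapshot` and the output format are illustrative choices, and in a Tomcat deployment the same values would more likely be read remotely over JMX than from a `main` method.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryMXBean;
import java.lang.management.MemoryUsage;

// Illustrative sketch: prints heap and non-heap usage for the current JVM.
class MemoryPoolSnapshot {

    static long toMb(long bytes) {
        return bytes / (1024 * 1024);
    }

    static String describe(String label, MemoryUsage usage) {
        // getMax() returns -1 when no maximum is defined for the area.
        String max = usage.getMax() < 0 ? "undefined" : toMb(usage.getMax()) + " MB";
        return String.format("%s: used=%d MB, committed=%d MB, max=%s",
                label, toMb(usage.getUsed()), toMb(usage.getCommitted()), max);
    }

    public static void main(String[] args) {
        MemoryMXBean memory = ManagementFactory.getMemoryMXBean();
        System.out.println(describe("Heap", memory.getHeapMemoryUsage()));
        System.out.println(describe("Non-heap", memory.getNonHeapMemoryUsage()));
    }
}
```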

Hardware

Not having enough physical memory, or having faulty memory, is a common cause of memory issues. Luckily, it is fairly simple to identify and fix. Damaged or bad physical memory is typically reported in your operating system logs, along with any low memory conditions identified by the operating system.

Keep in mind that different operating systems handle memory differently and have different recommendations and requirements. For instance, some versions of Windows Server 2022 and Red Hat Enterprise Linux 8 have a 2 GB recommended minimum amount of memory, while other distributions like Arch Linux can operate just fine with as little as 512 MB. So when considering physical memory requirements, take the OS requirements into consideration as well.

Thread Stack Size

The Thread Stack in Java is a native, private stack that stores call stack data about which methods the thread has called to reach its current point of execution. Each thread running in the JVM has its own thread stack. In most Java implementations, the default thread stack size is about 1 MB. However, this setting is implementation dependent, so check the documentation of your Java implementation to identify its default stack size.

For most applications, the default Thread Stack size for a given Java implementation will work just fine. However, for some applications, tuning the stack size might be beneficial for performance reasons. Using the -Xss Java argument, we can set the thread stack size to something more suitable to the application. This may even mean decreasing the stack size from the default, depending on application requirements.
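
To get a feel for what stack size means in practice, the sketch below measures how deep a trivial recursive method can go on threads created with different requested stack sizes. It uses the `Thread` constructor that accepts a stack size rather than -Xss, and the JVM is allowed to treat that value as a hint, so the numbers will vary by platform and implementation. `StackDepthProbe` and the sizes chosen are illustrative.

```java
// Illustrative sketch: compares recursion depth for two requested stack sizes.
class StackDepthProbe {

    private static void recurse(int[] counter) {
        counter[0]++;
        recurse(counter);
    }

    // Runs the recursion on a new thread with the requested stack size and
    // returns the depth reached before the stack overflowed.
    static int maxDepth(long stackSizeBytes) {
        int[] counter = new int[1];
        Runnable probe = () -> {
            try {
                recurse(counter);
            } catch (StackOverflowError expected) {
                // The thread stack is exhausted; counter[0] holds the depth reached.
            }
        };
        // The stack size argument is a suggestion; some platforms may ignore it.
        Thread thread = new Thread(null, probe, "stack-probe", stackSizeBytes);
        thread.start();
        try {
            thread.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return counter[0];
    }

    public static void main(String[] args) {
        System.out.println("256 KB stack: depth " + maxDepth(256 * 1024));
        System.out.println("2 MB stack:   depth " + maxDepth(2 * 1024 * 1024));
    }
}
```

An application with shallow call chains and many threads may do well with a smaller stack, while deeply recursive code may need a larger one.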

Heap Size

Heap Space in Java is the memory area utilized by the JVM to store objects instantiated by applications. It’s the primary memory space utilized by the Java application. If the heap memory is too small for a given application, you will generally see an OOME with the "Java heap space" message listed in the logs.

The -Xms and -Xmx Java arguments set the initial and maximum heap size, respectively. It's recommended to set these values to be the same so the heap isn’t contracting and expanding during the life cycle of the JVM, as resizing adds overhead and can decrease overall performance.
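
A quick way to confirm the recommendation is in effect is to inspect the arguments the JVM was actually started with. The sketch below is a minimal check, assuming HotSpot-style `-Xms<size>` and `-Xmx<size>` flags with optional k, m, or g suffixes; `HeapFlagCheck` and its method names are hypothetical. For Tomcat, these flags are commonly supplied through the CATALINA_OPTS environment variable, for example in bin/setenv.sh.

```java
import java.lang.management.ManagementFactory;
import java.util.List;

// Hypothetical helper: checks whether -Xms and -Xmx were set to the same size.
class HeapFlagCheck {

    // Converts a size such as "512m" or "2g" to bytes; returns -1 if unparseable.
    static long parseSize(String value) {
        String v = value.trim().toLowerCase();
        if (v.isEmpty()) {
            return -1;
        }
        long multiplier = 1;
        switch (v.charAt(v.length() - 1)) {
            case 'k': multiplier = 1024L; break;
            case 'm': multiplier = 1024L * 1024; break;
            case 'g': multiplier = 1024L * 1024 * 1024; break;
            default: break;
        }
        if (multiplier != 1) {
            v = v.substring(0, v.length() - 1);
        }
        try {
            return Long.parseLong(v) * multiplier;
        } catch (NumberFormatException e) {
            return -1;
        }
    }

    // Returns the size of the last flag starting with the prefix, or -1 if absent.
    static long flagValue(List<String> jvmArgs, String prefix) {
        long result = -1;
        for (String arg : jvmArgs) {
            if (arg.startsWith(prefix)) {
                result = parseSize(arg.substring(prefix.length()));
            }
        }
        return result;
    }

    static boolean heapIsFixed(List<String> jvmArgs) {
        long xms = flagValue(jvmArgs, "-Xms");
        long xmx = flagValue(jvmArgs, "-Xmx");
        return xms > 0 && xms == xmx;
    }

    public static void main(String[] args) {
        List<String> jvmArgs = ManagementFactory.getRuntimeMXBean().getInputArguments();
        System.out.println("JVM arguments: " + jvmArgs);
        System.out.println("Initial and maximum heap match: " + heapIsFixed(jvmArgs));
    }
}
```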

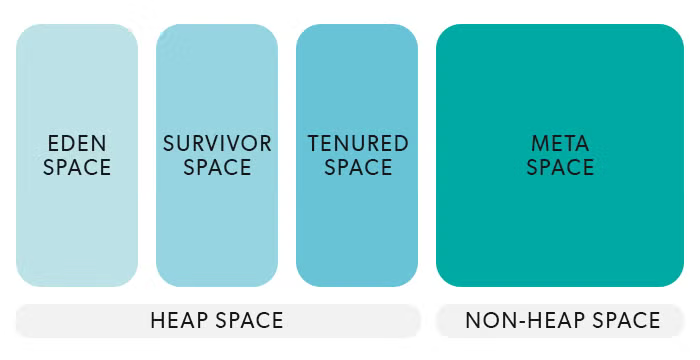

Heap size is broken into several areas as shown in the following diagram:

New objects are placed into the Eden space, and those that survive a garbage collection are moved into a Survivor space. After surviving one or more further garbage collections, the objects are eventually promoted to the Tenured space. This behavior can be tuned, and there are several different garbage collection algorithms that govern the behavior of this part of the heap space. (Going in-depth on garbage collection could be a blog post all of its own.)
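
The heap areas described above can be listed from inside the JVM. This sketch uses the standard memory pool MXBeans and filters for heap pools; the exact pool names depend on the garbage collector in use (for example, names containing "Eden", "Survivor", or "Old Gen"), so treat the names as collector-specific. `HeapPoolLister` is an illustrative class name.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryPoolMXBean;
import java.lang.management.MemoryType;
import java.lang.management.MemoryUsage;
import java.util.ArrayList;
import java.util.List;

// Illustrative sketch: lists the heap memory pools of the current JVM.
class HeapPoolLister {

    static List<String> heapPoolNames() {
        List<String> names = new ArrayList<>();
        for (MemoryPoolMXBean pool : ManagementFactory.getMemoryPoolMXBeans()) {
            if (pool.getType() == MemoryType.HEAP) {
                names.add(pool.getName());
            }
        }
        return names;
    }

    public static void main(String[] args) {
        for (MemoryPoolMXBean pool : ManagementFactory.getMemoryPoolMXBeans()) {
            MemoryUsage usage = pool.getUsage();
            // getUsage() returns null if the pool is no longer valid.
            if (pool.getType() != MemoryType.HEAP || usage == null) {
                continue;
            }
            System.out.println(pool.getName() + ": used " + usage.getUsed() / 1024 + " KB");
        }
    }
}
```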

Metaspace

Starting in Java 8, PermGen space was replaced by Metaspace. This native memory space is used to store class metadata and is considered non-heap space. Metaspace, by default, grows automatically and can be a considerable part of the total memory utilized by a Java application.

Metadata consists of basically any information the JVM needs to run a class. Metadata is only unloaded when the class itself is unloaded, and in some cases there might be a need to manage this space a little more tightly than the default behavior.

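The -XX:MetaspaceSize and -XX:MaxMetaspaceSize options can be used to bound the Metaspace area: MetaspaceSize sets the initial threshold at which a garbage collection is triggered to unload classes, while MaxMetaspaceSize sets the upper limit. MinMetaspaceFreeRatio is the minimum percentage of class metadata capacity free after a garbage collection to avoid an increase in the amount of space, while MaxMetaspaceFreeRatio is the maximum percentage of class metadata capacity free after a garbage collection to avoid a reduction in the amount of space.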
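
Before tightening any of these settings, it helps to see how much Metaspace the application actually uses. The sketch below looks for memory pools whose name contains "Metaspace", which is the name HotSpot-based JVMs use on Java 8 and later; this naming is an assumption about the JVM in use, and `MetaspaceReport` is an illustrative class name.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryPoolMXBean;
import java.lang.management.MemoryUsage;

// Illustrative sketch: reports Metaspace usage and whether a limit is set.
class MetaspaceReport {

    // A pool maximum of -1 means no limit is defined for that pool.
    static String formatLimit(long maxBytes) {
        return maxBytes < 0 ? "no limit set" : (maxBytes / (1024 * 1024)) + " MB";
    }

    public static void main(String[] args) {
        for (MemoryPoolMXBean pool : ManagementFactory.getMemoryPoolMXBeans()) {
            // Assumption: the pool is named "Metaspace", as on HotSpot-based JVMs.
            if (!pool.getName().contains("Metaspace")) {
                continue;
            }
            MemoryUsage usage = pool.getUsage();
            if (usage == null) {
                continue;
            }
            System.out.println(pool.getName()
                    + ": used " + usage.getUsed() / (1024 * 1024) + " MB"
                    + ", limit " + formatLimit(usage.getMax()));
        }
    }
}
```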


How to Configure Tomcat Memory Settings

Configuring Tomcat memory settings is done via Java arguments passed at run time. One challenge of tuning Tomcat memory settings is that we need to use fact-based evidence gathering and continual performance testing to validate our changes and findings. That makes building an accurate application profile using a load testing environment a must. One pitfall a lot of organizations fall into is over-tuning their Tomcat environment and introducing errors that did not exist before.

1. Create an Accurate Application Profile

Use JMX tools to track performance metrics such as total heap size, garbage collection times, and JVM throughput statistics. Do this in a performance and load testing environment before moving changes into production.
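
The metrics mentioned here are exposed through the JVM's standard MXBeans, which is what JMX tools read. The sketch below sums the reported garbage collection time and derives a rough throughput figure, meaning the share of uptime not attributed to garbage collection. `GcThroughput` is an illustrative class name, and for concurrent collectors the reported collection time is only an approximation of pause time, so treat the result as a trend indicator rather than an exact measurement.

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;

// Illustrative sketch: derives an approximate throughput figure from GC MXBeans.
class GcThroughput {

    // Percentage of uptime that was not attributed to garbage collection.
    static double throughputPercent(long gcTimeMillis, long uptimeMillis) {
        if (uptimeMillis <= 0) {
            return 100.0;
        }
        return 100.0 * (uptimeMillis - gcTimeMillis) / uptimeMillis;
    }

    static long totalGcTimeMillis() {
        long total = 0;
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            long time = gc.getCollectionTime(); // -1 if the collector does not report it
            if (time > 0) {
                total += time;
            }
        }
        return total;
    }

    public static void main(String[] args) {
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            System.out.println(gc.getName() + ": " + gc.getCollectionCount()
                    + " collections, " + gc.getCollectionTime() + " ms");
        }
        long uptime = ManagementFactory.getRuntimeMXBean().getUptime();
        System.out.printf("Approximate throughput: %.2f%%%n",
                throughputPercent(totalGcTimeMillis(), uptime));
    }
}
```

Recording these numbers before and after each change gives a baseline to compare against.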

2. Make One Change at a Time

After creating an accurate application profile, introduce changes to the Tomcat JVM one at a time and test each change. These changes should be done in a performance and load testing environment. Introducing too many changes at once can create a confusing process when moving these changes into production. Changes that haven’t been proven to provide a demonstrated performance gain should not be migrated into your production environment.

3. Test and Re-Test

Once changes have been migrated into production, you should revalidate them by tracking performance metrics. This is an ongoing process and should be part of your standard DevOps and testing processes.

Final Thoughts

We've covered a lot of the basics for Tomcat memory configuration in this blog, but, as noted above, this isn't a complete guide. Advanced Tomcat memory configuration requires a deeper understanding of how utilities within the JVM work (e.g., garbage collection).

That said, if you follow the basic best practices we've laid out above, you will have a better configured Tomcat deployment than many who let it run with out-of-the-box configurations.
