Blog

August 14, 2020

The Apache Camel framework, with its ability to handle complex aggregations, automatic error handling, and reduce boilerplate code used in complex integrations, is a top choice among open source middleware technologies.

In this blog, we give an overview of Apache Camel, including how it works and its benefits. Our expert also provides Apache Camel example use cases, and explains how to apply the Camel framework in your stack and get Apache Camel support.

Back to topWhat Is Apache Camel?

Apache Camel is an open source framework for message-oriented middleware.

Apache Camel is the industry standard for reducing boilerplate code for complex integrations — while maintaining features like:

- Automatic error handling

- Redelivery policies.

- The ability to handle complex aggregations.

Camel is a domain-specific language that’s an implementation of the enterprise integration patterns. It helps organizations solve some very specific problems.

Back to topApache Camel Benefits

There are many benefits to using Apache Camel. One of the biggest benefits is that the testing of the Apache Camel framework is outsourced to the community.

For example, the Apache Camel framework is based on Spring. If you’re utilizing Spring data to consume data from a Postgres database, the only business logic your team is responsible for writing a test harness for is in your implementation of the Spring framework. Your development team isn’t writing unit tests to validate that Spring Data returns rows and columns as it should from the JDBC datasource, or any of the underlying technology that that framework is built off of. JNDI, the JVM, the Linux Kernel.

There’s a lot that goes into a stack. And Apache Camel is the best choice to handle complexities of the code for handling the routing, transformation, scheduling, error handing, redelivery, and aggregation of batches and streams of messages.

Introducing Apache Camel to New Developers

There’s a little exercise I like to do when introducing Apache Camel to new developers and development managers. I ask about a complex integration problem that the enterprise is currently facing or has written a solution around in the past.

For example, I've heard about the output of a timekeeping system disconnected from an API in a remote site like a factory that needs to be consumed to run payroll. Shell scripts automate the movement of this file via FTP. Certain columns need to be dropped for privacy, maybe because of GDPR or State of California privacy requirements regarding PII.

There are sed, awk, and complicated, hard-to-test, easy-to-fail transformations that eventually connect to another integration, such as curling against a real API into the main NetSuite system for payroll.

Then, I ask about how many total lines of code are used to create the solution. Usually. it takes 100s or even 1,000s of lines of code if there’s error handling, complex transformations, routing, and aggregation.

Apache Camel can handle even the most complex of scenarios in 10, maybe 20 lines of code.

Back to topApache Camel Framework and Components

Apache Camel can handle complexity because it is an amazing component-driven, message-oriented routing, and normalization framework. Apache Camel's framework is based on Spring. So, you can program Apache Camel “routes” as XML, Scala, and — my favorite — fluent-style Java code.

Apache Camel provides an inversion of control (IoC) approach to data routing. This approach allows for a seamless transition of messaging data between a wide variety of integration components.

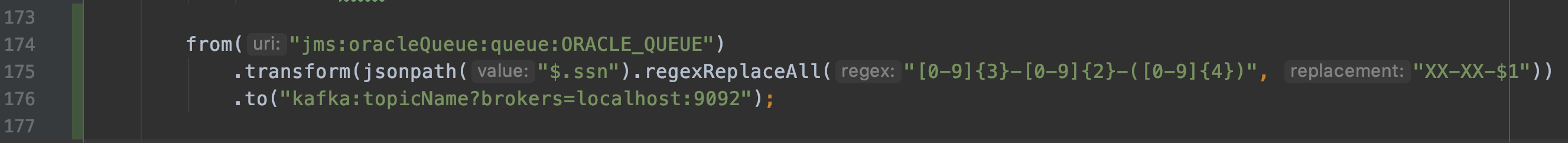

This variety can mean 100s of well-tested components. Consuming from OracleMQ, stripping a sensitive field like SSN from the JSON, and placing those messages onto a Kafka queue? Three lines of code.

Here's how it looks in the Apache Camel framework:

The first line consumes messages from JMS, like ActiveMQ. The second uses JsonPath to pull the SSN from the record and replaces all SSN patterns with XXX-XXX-1234. The third line places the transformed message onto a Kafka topic.

Back to topHow Apache Camel Works

Back to topApache Camel's URI-based routing methodology allows for the composition of message consumers and producers at runtime based on config flags and environment variables. A Docker container can be written to dynamically route messages to and from locations based on operator need.

Apache Camel Example

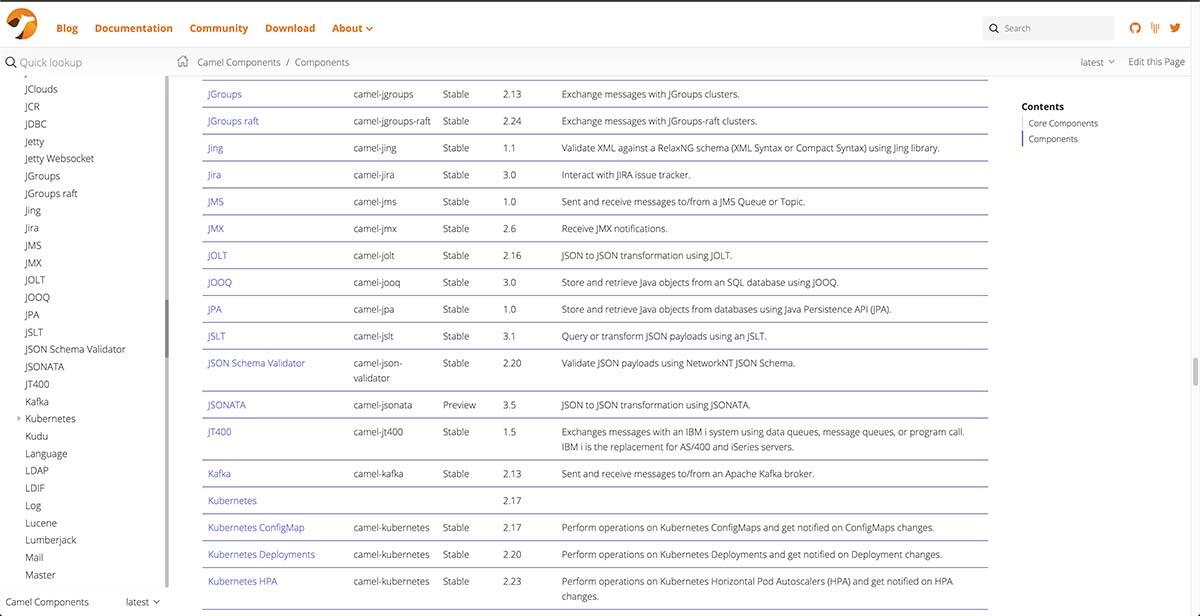

A relevant Apache Camel example: I recently worked an architecture out with a financial institution where we would utilize Camel as a cron runner, with a definitive “finished” state, and allow Kubernetes to schedule it. Their use case was log shipping from a black box system to ElasticSearch and Kibana for reporting. But there are a ton of integrations for Camel — including over 333 stable ones.

Is the Apache Camel Framework Still Relevant?

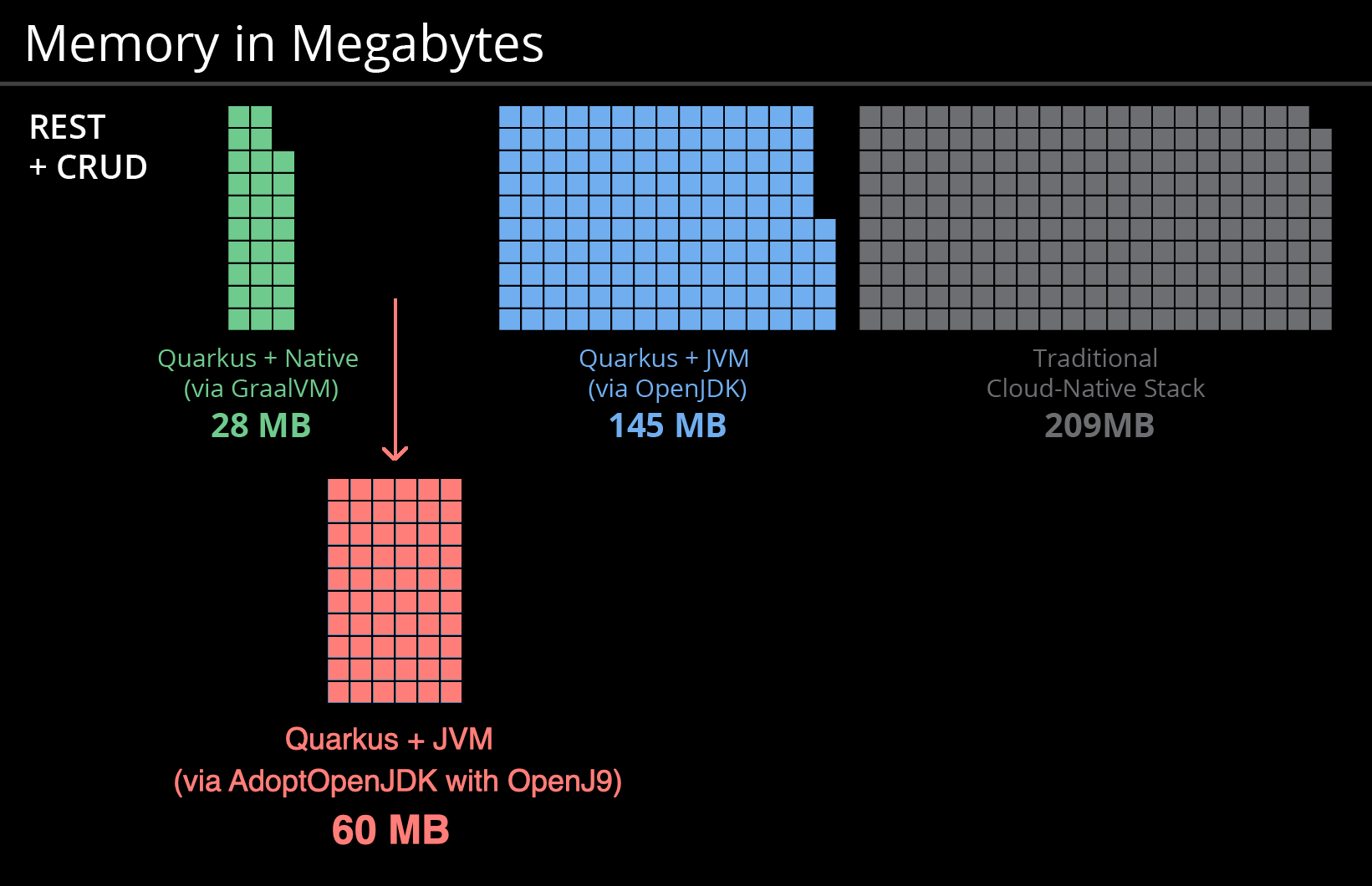

Absolutely. Apache Camel works great in modern DevOps environments that rely on containers and Kubernetes. Apache Camel is compatible with GraalVM and Quarkus, which means it can create native images that utilize 10% of the same memory of a traditional cloud stack. This is game-changing for distributed cloud applications.

Why Use Apache Camel?

Apache Camel makes it simple and scalable to attach hundreds of different heterogeneous service endpoints in an efficient way. Its component-driven approach focuses on the reduction of boilerplate code while writing integration logic.

Simply put, when you use the Camel framework, you spend more time writing code that matters to the enterprise.

Back to topGet Apache Camel Support

There are many benefits to using Apache Camel. But making the Camel framework work for you, especially if you're part of a large organization, can be tricky. That's why it's best to partner with the experts for Apache Camel support.

OpenLogic offers Apache Camel training, consultative Apache Camel support, and so much more.

Our Camel experts can help you with:

- Boilerplate reduction

- Dynamic routing

- Messaging resiliency

- Integration patterns

- Security