Blog

July 8, 2021

For organizations who want to simplify PostgreSQL clustering, Patroni is a popular option. In this blog, we look at the PostgreSQL Patroni combination, how it works, how to configure it, and commands and tips to help you along the way.

- What Is Patroni?

- How Does Patroni Work?

- Installing Patroni on CentOS

- Patroni Configuration

- Patroni PostgreSQL Configuration

- Patroni Commands

- Patroni Replication Modes

- Patroni REST API

- Final Thoughts

What Is PostgreSQL Patroni?

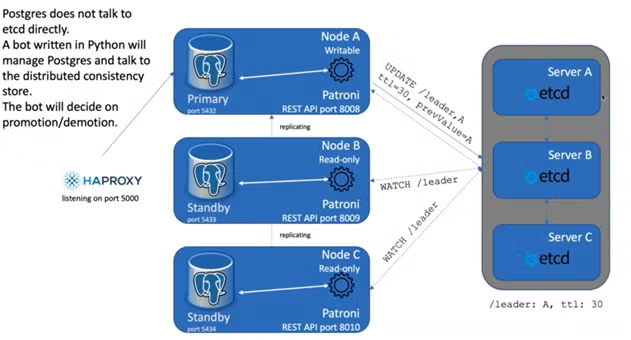

Patroni is a Python-based open-source PostgreSQL template and controller, in which you can run high-availability Postgres clusters. Patroni is technically a Python daemon, in which single Python daemons manage single PostgreSQL instances. It operates on the same system as PostgreSQL and was created out of a need for an automatic failure option for Postgres, while it leverages the permissive MIT license.

Patroni uses a distributed configuration store to track which Postgres instance is the master. Likewise, it can be configured to work with Zookeeper, etcd, or Consul configuration stores.

PostgreSQL Patroni implementations can simplify the overall cluster management lifecycle significantly.

Back to topHow Does Patroni Work?

Approximately every 10 seconds, Patroni checks every Postgres node in the cluster for the presence of an existing primary in the Distributed Configuration Store (DCS). If no primary is found, it inserts a key in the DCS to claim the primary location. If this server happens to be the primary, it informs HAProxy to use this as the new master. On the other hand, if a primary is found, it performs a variety of checks and attempts to transform the current server into a replica version. It’s truly that simple.

Patroni Example

Node B will then start replicating from Node C. And then when HAPROXY hits each nodes’ REST API ports, it will figure out that node A is no longer available, and which of the remaining nodes is the leader. /

Back to topGet Support for PostgreSQL

No matter your technical challenge or needs, OpenLogic can provide a solution. With support for over 450 open source packages, we can help you with all things PostgreSQL.

Installing PostgreSQL Patroni on CentOS

Patroni is relatively straightforward to install. Here’s a quick step-by-step guide:

- Install the Extra Packages for Enterprise Linux repository

- Install wget, git, etcd, and Package Index for Python, curl, and the JSON processor

- Install PostgreSQL 13

- Upgrade the package manager for Python

- Install the PostgreSQL adapter for Python

Patroni Configuration

Configuration is also relatively straightforward with Patroni. After you have installed Patroni, the bin_dir (bin directory) of the potgres0.yml, postgres1.yml, and postgres2.yml will need to be adjusted to the Postgres server installation in the following manner:

- listen: 127.0.0.1:5432

- connect_address: 127.0.0.1:5432

- data_dir: data/postgressql0

- bin_dir: /usr/pgsql-13/bin

PostgreSQL Patroni Configuration

While the general installation and configuration process is relatively easy, there are a few nuances you will need to be aware of in order to keep Patroni functioning properly. There are three configuration types including dynamic, local, and environment configuration. We walk through each configuration in detail below.

Dynamic Configuration

Dynamic configuration (etcd) is the global configuration of the cluster. When you change a value in etcd, you are effectively altering the configuration of every PostgreSQL node in the cluster in a global fashion. Changes are applied asynchronously during the next available wake cycle. Here are some of the common configuration settings in etcd that can be changed based on cluster-wide needs:

- max_connection (100)

- max_locks_per_transaction (64)

- max_worked_process (8)

- max_prepared_transactions (0)

- wal_level (hot_standby)

- wal_log_hints (on)

- track_commit_timestamp (off)

Local Configuration

Your local configuration (patroni.yml) will override anything set in a cluster-wide dynamic configuration (etcd). You can apply local changes using patronictl reload or by sending a POST / reload REST API request.

Environment Configuration

If you are running Postgres in containers, environment configurations can be useful in allowing you to override local configurations. If you are not running Postgres in a containerized environment, you likely will not need to worry about the environment configurations.

Back to topPatroni Commands

There are a variety of Patroni commands you may leverage on a regular basis. Here are some of the most important:

| Patroni Command | Description |

|---|---|

| Patronictl.py -c postgres0.yml show-config batman | Show the cluster-wide configuration. |

| Patronictl.py -c postgres0.yml failover batman | Allows the user to perform manual failover when there are no healthy nodes, but at the same time it will not allow you to schedule a switchover. |

| Patronictl.py -c postgres0.yml switchover batman | Opposite of a failover. Only works when the cluster is healthy and allows to schedule a switchover at a given time. |

| loop_wait | Shows the maximum number of seconds a replica will notice a missing master. |

| maximum_lag_on_failover | The maximum bytes a follower may lag to be able to participate in leader election. |

| retry_timeout | Timeout for DCS and PostgreSQL operation retries. DCS or network issues shorter than this will not cause Patroni to demote the leader. |

Patroni Replication Modes

There are two PostgreSQL streaming replication modes, including asynchronous and synchronous. By default Patroni configures PostgreSQL for asynchronous replication. The replication mode you select will depend heavily on your business needs and technology implementation.

Asynchronous Mode Durability

This is Patroni’s default replication mode. The cluster is allowed to lose some transactions to remain highly available. Any non-replicated transactions will be ported to a standby to remain in a “forked timeline” on the primary and will not be recovered. maximum_lag_on_failover controls the amount of transactions can be lost by a standby.

PostgreSQL Synchronous Replication

Synchronous replication confirms that writes have been committed to a secondary before returning to the client with a success confirmation. In general, you will get less throughput on writes with synchronous replication. This can cause issues with Postgres instances running in separate data centers due to network latency. If follower nodes are not accessible by the master, then the master defaults to read-only. It is still possible to lose transactions in this mode. To prevent transaction loss, you can enable synchronous_mode, or synchronous_mode_strict.

Back to topPatroni REST API

The Patroni REST API allows you to perform all of the tasks that you would perform within Patroni via an external API. The REST API can be use by HAProxy, or any other kind of load balancer, such as F5 for performing HTTP health checks. This API could also be used as a monitoring proxy, such as Prometheus. This is worth checking out, because you simply get a huge wealth of data with the Patroni REST API. Finally, note that all data within the Patroni REST API is stored as JSON data.

Back to topFinal Thoughts

As a cluster management tool for automating deployment and management of PostgreSQL high-availability clusters, Patroni can really streamline your Postgres footprint. While getting up and running may take a bit of time, the long-term benefits are well worth it.

Get Implementation Services

Still having trouble understanding how to get Patroni up and running? We offer implementation services that can get get you up and running fast.

Additional Resources

- Blog – What Is PostgreSQL?

- Blog – Exploring the PostgreSQL System Catalogs

- Blog – PostgreSQL Support Options

- Blog – EnterpriseDB vs. PostgreSQL

- Blog – PostgreSQL vs. MongoDB

- Blog – PostgreSQL vs. MySQL

- Resource Collection - Intro to Open Source Databases